September 15, 2016

The cover

The personal computer, posits Robert Cringely, was the product of "people who find creativity so effortless that invention becomes like breathing or who have something to prove to the world."

They are the people who are left unchallenged by the simple routine of making a living and surviving in the world and are capable, instead, of first imagining and then making a living from whole new worlds they've created in the computer.

Even when the computer in question exists only on the cover of a magazine. Because of deadlines, the actual Altair computer gracing the cover of that famous issue of Popular Electronics was a mockup, not a working model. When the photograph was taken, a working production model wasn't available to demo.

That didn't matter. For Bill Gates, "enlightenment came in a flash."

Walking across Harvard Yard while Paul Allen waved in his face the January 1975 issue of Popular Electronics announcing the Altair 8800 microcomputer from MITS, they both saw instantly that there would really be a personal computer industry and that the industry would need programming languages. Although there were no microcomputer software companies yet, 19-year-old Bill's first concern was that they were already too late. "We realized that the revolution might happen without us," Gates said. "After we saw that article, there was no question of where our life would focus."

The difference this single-minded focus made on the future is apparent in the interviews with Bill Gates and Gary Kildall in the first (Feb/Mar) and third (Jun/Jul) issues of PC Magazine. (The first three issues are bound together into a single volume; you can find Kildall's by searching on his name.)

Gates comes across as hyper-aware of the emerging digital zeitgeist, the needs of his client (IBM) and the geek culture that spawned the then-nascent PC industry. But he is also thinking past all of them to all the ordinary consumers out there who just wanted a tool, an appliance. They were the future.

"A computer," Gates boldly promised in Microsoft's mission statement, "on every desk and in every home all running Microsoft software."

Kildall, by contrast, is very much the tenured professor he was before founding Digital Research. He's not quite sure what the rush is all about (a big reason the hard-pressed Boca Raton IBM team quickly turned to Microsoft to supply an operating system for the IBM PC).

Kildall gets animated about the then-arcane subject (a decade premature) of "concurrency" (multitasking) and proudly points to the assembler and debugger that ship with CP/M and CP/M-86. "So you can just pick up CP/M-86 and start developing your own high-performance applications."

Well, um, no. The vast majority of us can't, and neither could most of the geeks and nerds excited about the new, affordable "personal computer."

Okay, I used Kildall's debugger to hack the screen display and printer buffer in the CP/M version of WordStar so it'd run correctly on my Kaypro II. That was pretty much the beginning and the end of my life as a developer of "high-performance applications" using machine code.

In his interview, Gates instead enthuses about BASIC. BASIC is literally about as basic as a programming language gets. BASIC compilers were even a thing for a while, because ordinary computer enthusiasts (like me) could understand BASIC well enough to write working code.

Microsoft BASIC was initially the only reason to buy an Altair or an IBM PC. Microsoft Corporation was created to sell BASIC for the Altair, and the IBM PC shipped with Microsoft BASIC in ROM. The importance of BASIC (and a smattering of assembly language) is reflected in the early issues of PC Magazine.

"The Microsoft Touch" in the September 1983 issue of PC Magazine nicely ties BASIC to the beginnings of Microsoft.

But even in the premiere issue, the emphasis was on the up-and-coming commercial apps (in particular, the spreadsheet and word processor), not programming languages. The VisiCalc spreadsheet made the Apple II the first "office PC," and Lotus 1-2-3 would do the same for the IBM PC.

Though Kildall was right for a time. Because of the enormous cost of memory and the constraints on bus and CPU speeds, DOS programs like WordPerfect (up to version 5) were written in assembly language. But it took thousands of employees to develop and market WordPerfect 5.

So Gates was being amazingly prophetic when he predicted in 1982 that in the future,

We'll be able to write big fat programs. We can let them run somewhat inefficiently because there will be so much horsepower that just sits there. The real focus won't be who can cram it down it, or who can do it in the machine language. It will be who can define the right end-user interface and properly integrate the main packages.

But I don't think Gates could have imagined then just how much of the technological world 30 years hence would run on high-level interpreted code, or that hardly anybody would notice or complain because the hardware had gotten so fast and so inexpensive. (Well, I notice on my old Windows XP laptop.)

In 2015, Apple produced a watch with orders of magnitude more memory and a CPU a hundred times faster that cost a tenth as much as the original IBM PC. Though, frankly, a creaky old IBM XT running Lotus 1-2-3 and WordPerfect 4.2 would be a lot more useful.

Productivity. That's why the PC changed everything.

Related posts

The future that wasn't

The accidental standard

MS-DOS at 30

The grandfathers of DOS

Digital Man/Digital World

Labels: business, computers, tech history, technology

September 08, 2016

The grandfathers of DOS

Courtesy PC Magazine, June/July 1982.

One of the tech pioneers who navigated the rocky transitional period was Gary Kildall (1942–1994). Kildall's CP/M operating system played a key role in shifting the software paradigm from the mainframe and minicomputer to the personal computer.

Kildall came a generation after Ken Olsen, half a generation before Gates, Wozniak, and Jobs. Olsen served in WWII. Kildall was a graduate student at the University of Washington when he was drafted into the Vietnam War. He would spend his enlistment teaching computer science at the Naval Postgraduate School.

He later became a tenured professor at NPS while consulting in Silicon Valley. In the early 1970s, he started work on CP/M, an 8-bit operating system designed to power the new microcomputers that ordinary people could afford.

As with the Altair, the 8080-based PC kit that Ed Roberts was building in Albuquerque, word of mouth ignited a tidal wave of interest and curiosity in the burgeoning "home brew" computer community. Kildall retired from teaching and together with his wife started Digital Research to develop and market CP/M.

By 1978, the company (headquartered in their house in Pacific Grove, California) had achieved sales of $100,000 a month.

Along with CP/M, two more Kildall innovations made the PC possible. On the technical side, the BIOS chip created a hardware "abstraction" layer that allowed an operating system to work "out of the box" with various hardware configurations without being hand-tuned for the particularities of each one.

On the business side, with the BIOS chip in hand, Digital Research could divorce the OS from dependency on a single hardware platform or manufacturer and sell CP/M to all comers, a marketing model that Microsoft would follow with great success.

The Apple I had debuted in 1976, built on "that horrible MOS Technology 6502 processor," as Kildall described it. But CP/M remained the dominant general-purpose microcomputer OS, running on the 8-bit Intel 8080 and Zilog Z-80. For a time, Microsoft sold the Z-80 SoftCard that enabled CP/M to run on the Apple II.

The SoftCard was Microsoft's number one revenue source in 1980, making Microsoft a major CP/M vendor. And it was probably the reason IBM thought Microsoft was also an OS developer.

During the late 1970s, Kildall got distracted customizing the PL/I compiler for Intel CPUs. Development of CP/M languished for almost two years.

Apple released the Apple III in 1980. It was plagued by reliability problems and a lack of software and, like the later Lisa, carried a "sky-high" price. On sabbatical at the time, Steve Wozniak returned to Apple in order to supervise production of the highly successful Apple IIe. Apple regained its footing in 1983.

But in 1981, the microcomputer industry was without a technological leader. In August of that year, IBM changed everything with its 16-bit Intel 8088-based PC.

In Triumph of the Nerds, Jack Sams recounts how his IBM team, in desperate need of an operating system for the IBM PC, approached Digital Research (on the recommendation of Bill Gates) but couldn't get anybody to sign the strict non-disclosure agreement or agree to their tight production schedule (accounts differ).

The second time IBM raised the issue with Microsoft, Bill Gates signed in a heartbeat. Gates didn't care about the licensing terms as long as they were non-exclusive and Microsoft could sell MS-DOS to other hardware manufacturers. IBM agreed and Microsoft changed the world.

Except Microsoft didn't have an OS in development. So it licensed 86-DOS from Seattle Computer Products for a song and hired the guy who designed it, Tim Paterson.

Kildall later protested that Tim Paterson hadn't reverse-engineered CP/M but had copied his source code. He never pursued this claim. (When Compaq later reverse-engineered the IBM BIOS, it documented every step with legal precision and was never sued by the litigious IBM.)

Paterson, employee number 80 at Microsoft, remembers his historic role with something of a philosophical shrug.

It's been pooh-poohed as Seattle Computer being suckers or something for taking the deal because it made Microsoft so much money. I don't know how many people would have said the guy who provides the operating system to IBM is going to make it rich. I have the impression Bill Gates and Paul Allen felt it was a gamble, not that they were sitting on a gold mine and knew it.

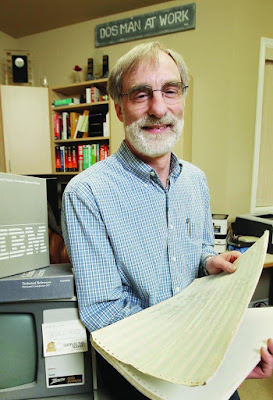

Tim Paterson

In any case, Kildall's 16-bit version of CP/M for the PC didn't come out until April 1982, and then initially at six times the price of MS-DOS. Alas, it wouldn't be competitive at any price. The computing world finally had a software and a hardware standard and was sticking with it.

These latter details don't make it into Kildall's memoir, which concludes at the end of the 1970s. Or at least the version we have. Kildall never published the manuscript. The first half was recently made available as a free PDF.

In Computer Connections: People, Places, and Events in the Evolution of the Personal Computer Industry, Kildall writes in a readable style, not overburdened with technical jargon (although there is plenty of that). It's a compelling personal account of the roots of the PC operating system.

The theme of his recollections might be: "You kids don't know how tough we old-timers had it!" He says this with a wink and a nod, but he's absolutely right. Compared to the hoops programmers once had to jump through, the floppy disk drive and the command line were absolutely amazing steps forward in usefulness.

Kildall's account ends at the end of the 1970s, before the stormy advent of the IBM PC (read the rest of the story here). But he does mention two other times he and Bill Gates crossed paths. The first sounds like a script straight out of Hollywood.

When Kildall was at the University of Washington, two high school students hacked into C-Cubed, a time-sharing computer company run by the director of the UW Computer Center. The kids were none other than Bill Gates and Paul Allen, the future founders of Microsoft. And what happened to them? C-Cubed hired them.

A decade later, a fledgling Microsoft was based in Albuquerque, creating software tools for the Altair. In 1977, Gates came to Pacific Grove to discuss the future of the business with Kildall, who was then running the world's most successful microcomputer software company.

Kildall remembers them getting along like oil and water, "his manner too abrasive and deterministic, although he mostly carried a smile through a discussion of any sort." Kildall had no desire to "compete with his customers" and turn Digital Research into a one-stop shop that sold both tools and applications.

Exactly what Gates was planning to do. Recalled Kildall,

We talked of merging our companies in the Pacific Grove area. Our conversations were friendly, but for some reason, I have always felt uneasy around Bill. I always kept one hand on my wallet, and the other on my program listings. It was no different that day.

So Microsoft ended up back in Seattle, where Gates and Allen grew up.

Kildall didn't think highly of Gates as a computer scientist. But in all fairness, I'll point to this landmark interview by Dave Bunnell in the debut issue of PC Magazine. As early as 1982, a young Bill Gates demonstrated a remarkably insightful grasp of where the personal computer industry was headed.

Gary Kildall may not have liked the man he ended up passing the baton to, but there's no denying that Bill Gates grabbed it and ran like a bat out of hell.

Related posts

Computer Connections

The accidental standard

MS-DOS at 30

Digital Man/Digital World

The cover

Labels: business, computers, history, tech history, technology

September 01, 2016

Digital Man/Digital World

Before Intel, before Microsoft, before Apple, before the IBM PC and Compaq Computer (the company that would later acquire Digital), there was Digital Equipment Corporation. The new reality that a computer could be "small enough to be stolen" (based on an actual incident) began not in Silicon Valley but in Maynard, Massachusetts.

Digital Equipment Corporation (DEC) was founded by Ken Olsen in 1957.

Ken Olsen had served in the Navy during WWII as a radar technician. After the war, he earned a degree in electrical engineering at MIT, where he worked on the Whirlwind project. The Whirlwind computers powered the prototypes of the SAGE (Semi-Automatic Ground Environment) air defense system during the early years of the Cold War.

Olsen had acted as a liaison with IBM during the Whirlwind project (IBM built the computers that MIT designed), and recalled that IBM was "like going to a communist state." He brought that attitude with him when he founded DEC.

Although a conservative Christian who wore a tie, banned alcohol from company parties, and always ate dinner with his family (before going back to work), Olsen was one of the first "hippie" CEOs. He championed the flat corporate hierarchy, the employee-friendly workplace, and the customer-oriented business.

He drove a sedan and vacationed in the Canadian wilderness. Abandoning the organizational chart, he managed by walking around, and was on a first-name basis with his employees. He actively recruited women and minorities (this was in the 1960s and 1970s) and didn't lay off a single person until 1988.

In committee meetings, managers were expected to own their ideas, defend them, and fight things out: "You could get in somebody's face as long as you didn't stab them in the back."

DEC was the first high-tech company funded by venture capital (American Research and Development Corporation) and produced a crop of multi-millionaires when it went public in 1966. After Ken Olsen retired (involuntarily), he gave away most of his accumulated wealth.

DEC's truly disruptive innovation was the minicomputer. Instead of IBM's room-sized mainframes, the PDP-1 was a filing-cabinet-sized time-sharing computer that cost less than $120,000, a bargain back in the early 1960s. The later PDP-8, introduced in 1965, shaved more than $100,000 off that price.

The 16-bit PDP-11 was the first computer to tie internal communications together on a shared bus, a feature later adopted by the Altair, the Apple II and the IBM PC. The 32-bit, network-ready VAX debuted in 1977 and became DEC's most popular minicomputer, a mainstay of university engineering labs.

The PDP-11 control console (top) looks like a "computer." Built for computer geeks, the Altair front panel resembled the PDP-11 console.

By the early 1980s, Digital had become the second-largest computer company in the world. In one of the great ironies that typify the last half-century of the tech industry, while disrupting the staid mainframe business and making computers truly affordable, DEC sowed the seeds of its own downfall.

This trend in affordability accelerated in the 1970s with the advent of a slew of inexpensive 8-bit CPUs that powered the Altair, the Apple II, and the Commodore, with the Intel and Zilog varieties running the soon ubiquitous CP/M operating system.

IBM responded to the PC threat with the 16-bit IBM PC, engineered in its freewheeling Boca Raton division using low-cost OEM components and a second-hand operating system from an upstart software company called Microsoft. It produced a smash hit product that eventually sowed the seeds of its own downfall too.

By comparison, the IBM PC looks like an appliance.

DEC went in the opposite direction, sinking resources into the VAX 9000 supercomputer, high-end multiprocessor microcomputers, and the Alpha 64-bit RISC processor. This was a decade before 64-bit computing would arrive in an affordable PC package. Everybody loved the Alpha but nobody knew what to do with it.

Powered by Intel's inexpensive x86 chips, the PC was growing so fast that "good enough" quickly became more than enough to do the job. Before long, personal computers were easily matching the power of DEC's previous minicomputers. The PC had turned into a minicomputer.

In a complete turnaround (just as Clayton Christensen would have predicted), DEC found itself defending the high end of the market and getting disrupted from below.

DEC's premium hardware failed to find a market, resulting in a $2.8 billion loss in 1992. That year, Olsen was ousted as CEO. Compaq acquired Digital in June 1998, only to merge with HP four years later. DEC all but disappeared amidst the corporate reorganization rubble.

Though largely forgotten, its influence lives on.

When Paul Allen and Bill Gates developed a BASIC interpreter for the brand-new Altair in 1975, they didn't have an Altair computer. Instead, they used an Intel 8080 emulator Allen had written for the DEC PDP-10 in Harvard's Aiken Lab. Amazingly, the program ran the first time it was installed on an actual Altair.

BASIC was Microsoft's founding product, its first best-selling product, and would later be adopted by both IBM and Apple. And it was born on a DEC minicomputer.

Then in 1988, Microsoft hired Dave Cutler, architect of the VMS operating system for the DEC VAX, to design a preemptive multitasking OS. Since the release of Windows XP, all desktop and server versions of the Microsoft OS (plus Windows Phone since version 8) have been built on Dave Cutler's NT kernel.

Our modern technological world was in no small part created by Ken Olsen and Digital Equipment Corporation.

Related posts

The accidental standard

MS-DOS at 30

The grandfathers of DOS

The future that wasn't

Something Ventured

Labels: computers, tech history, technology